Digital Actors have been a research topic at Filmakademie Baden-Württemberg’s Animationsinstitut, and Volker Helzle has been a chief researcher at the Filmakademie for many years.

In particular, Helzle and his team have focused on tool development for believable facial animation, taking as their subject one of the greatest minds of the ages, Albert Einstein. This homage to the physicist and humanist investigates how documentary film formats can extend their horizons through the meaningful inclusion of digital actors.

“As most digital actor projects are related to high-end and heavy budget productions, we wanted to demonstrate how our tools can enable small production teams with limited budgets to realize a believable digital actor,” Helzle explains. “In our exemplary production, we estimated the digital asset creation with 85 working days and 80 working days for each of the three episodes. Another goal was to build and release a reference for others to gain insight in the creation process.”

Facial Animation Toolset

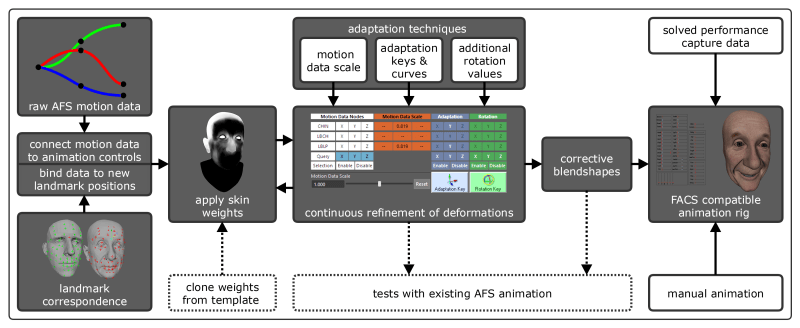

The Filmakademie team used the Facial Animation Toolset, a collection of extensions for Autodesk Maya that speeds up the rigging and deformation process. Key to the approach is the Adaptable Facial Setup (AFS). AFS uses motion data, drawn from a library derived from an extensive facial motion capture session, to apply generalized muscle group movements. After the initial recording, all data was cleaned, stabilised and further refined to reflect the basic movement of the facial muscle groups. This data is very dense and has a distinct character with respect to the activation of muscle groups. The AFS tool is designed to maintain this character while allowing adaptation to the physiognomy of a specific face. This is a manual process that requires a skilled rigger.

“Rigging the Einstein character’s face took about a week,” says Helzle. “This approach is very effective in terms of computation time. We could achieve interactive frame rates within Maya, which is not common for a rig of this complexity.”

The big advantage of the AFS approach is its non-linearity. Compared to a combination of individual but static blend shapes, it contains much more deformation information. The results were discussed in a paper at SIGGRAPH 2018 in Vancouver, Canada.
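To make the contrast concrete, here is a minimal sketch of the general idea, not the AFS implementation. It assumes a single hypothetical 2D point (say, a mouth corner) and invented sample positions. A static blend shape moves the point on a straight line from neutral to target, whereas a deformation driven by recorded samples can follow the curved path the samples describe. The names `blend_shape`, `sampled_path` and `captured` are illustrative only.

```python
# Illustrative sketch only: hypothetical numbers, not the AFS implementation.

def lerp(a, b, t):
    """Linear interpolation between two 2D points."""
    return (a[0] + (b[0] - a[0]) * t, a[1] + (b[1] - a[1]) * t)

def blend_shape(neutral, target, weight):
    """Static blend shape: the point travels on a straight line."""
    return lerp(neutral, target, weight)

def sampled_path(samples, weight):
    """Sample-driven deformation: interpolate through recorded positions."""
    if weight <= 0.0:
        return samples[0]
    if weight >= 1.0:
        return samples[-1]
    scaled = weight * (len(samples) - 1)
    index = int(scaled)
    return lerp(samples[index], samples[index + 1], scaled - index)

# Hypothetical mouth-corner positions (x, y) recorded during one expression.
captured = [(0.0, 0.0), (0.6, 0.1), (0.9, 0.5), (1.0, 1.0)]

for w in (0.0, 0.5, 1.0):
    linear = blend_shape(captured[0], captured[-1], w)
    curved = sampled_path(captured, w)
    print(w, linear, curved)
```

Both methods agree at the start and end of the activation, but at intermediate weights the sample-driven point sits off the straight line. That intermediate shape is the extra deformation information a single static target cannot carry.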

Curriculum application

The tools and workflows developed by the Einstein project team are the subject of workshops with Filmakademie students. Evaluating a new tool with the feedback of animation students turned out to be very effective. The Technical Director (TD) students are more involved in the development and research aspect. There is a 30-month postgraduate Technical Director course at Filmakademie’s Animationsinstitut, open to applicants worldwide, though English proficiency is required. There is a relatively small tuition fee for non-EU citizens.


MoCap versus Rotomation

This was an interesting journey for the Filmakademie team. At the outset, everyone wanted to use motion capture, but in the end they chose fully hand-animated rotomation. “On set, we tried to avoid a head-mounted camera,” explains Volker Helzle, “since we definitely did not want to deal with retouching a bulky head rig occluding hair and the background. We evaluated a commercial solution for solving the MoCap data to FACS (Facial Action Coding System) parameters from the principal camera view. It worked in principle but not really for the subtleties. There was noise and some misinterpreted actions due to the lack of additional witness cameras or higher-resolution footage. While we evaluated the solving process, we had our skilled animators do the first pass, by hand. The results were much more promising doing it manually than the MoCap to FACS solving process, so we stayed with this workflow.”

Shoot day

This was quite a traditional shoot and the crew concentrated on directing, framing and lighting. “Yet, on set, we didn’t have the capabilities to review how the CG Einstein mask would fit into the scenario,” Helzle admits. “On location, we set up an Arri Alexa camera on a dolly and had a basic three-point lighting kit. The space on set was extremely limited and we had only one set shooting day, so the equipment had to be minimal.” Reference photos were taken for each shot and they used spherical HDRI light probes. The shots were fine-tuned with additional digital lighting effects to match the real conditions.

Face Tools

The team collected about 30 reference photographs of Einstein. From those, one was selected as the key, and a professional sculptor created a cast model which was then 3D scanned with photogrammetry at the Disney Research laboratories in Zurich.

Sculpting and texturing were performed in ZBrush and from that, the digital model mesh topology was rebuilt and further refined. “For some signature expressions, dedicated displacement maps were created in ZBrush and triggered by the AFS tool,” Helzle adds. “The model has all the anatomical components of a human head. Starting with the skull, teeth, the tongue with a rig, and a sophisticated eye model including a tear layer. The skull was used for a muscle and skin sliding simulation which prevented unwanted deformation in the forehead and jaw area. For animation and rigging we used Autodesk Maya. For look-dev and rendering V-Ray was used. Compositing was done in Nuke with the help of Keen Tools for geometry tracking.

“The biggest challenge was certainly the mismatch of the real actor and the picture of the elderly man we’d all expect when we think of Einstein,” concedes Helzle. “The most problematic areas were the neck and shoulders. We had to work a lot with warp-deformations in Nuke to compensate for this. Though this physical mismatch constituted an additional challenge, the performance and voice interpretation of the actor did exceed our expectations.”

Lessons Learned

“We definitely learned a lot,” he summarised. “Although some of the team had worked on earlier digital actor projects and were familiar with the rigging and animation process, the whole shoot and capturing part was an entirely new challenge. As mentioned earlier, we could not use the facial marker data due to a lack of resolution. In any future project, we would pay attention to an ideal coverage with witness cameras or even mount an optical motion capture system that could deliver us the 3D motion data immediately. This would speed up the production and allow us to introduce automatization to some degree, at the same time also simplifying the cumbersome match-move process. Another possibility would be to split the shoot into two parts. One pass with the actor on location with an emphasis on camera, lighting, gesture and head tracking, a second pass with the actor in a controlled studio environment capturing the facial performance with a head-mounted camera. However, this may introduce further inconsistencies.

“Additionally, the team on location often had to guess whether camera, lighting and the actor’s performance would be applicable as the basis for the digital face replacement. Being somehow clueless on the film set is harmful to the creative process and makes it impossible to come to well-founded decisions. Luckily, we have been working on virtual production tools and real-time previsualization for years and the next logical step would be to integrate this know-how for digital actor projects. Using real-time capturing for camera and facial performance together with a fast rig and immediate retargeting would empower us to present a first rough, but certainly helpful composite to the decision-makers on the film set. Of course, big productions have already laid the foundation for such real-time preview, but our aim would again be to democratize this technique and develop solutions for inexpensive and available software and hardware.”

Despite the trial-and-error shoot, the Filmakademie team were satisfied with the content creation and shot production. Also, they were pleased to find out that in general, the AFS approach proved to be suitable. A subsequent evaluation showed that 72% of those who participated in an online questionnaire favoured the AFS over a standard blend-shape rig.

“With all the buzz about machine learning and face swap, we are still missing tools that can aid an artist-driven approach,” Volker Helzle sums up. “How can we use machine learning to achieve creative control while maintaining parts of established creation pipelines? These are the topics of further investigation and will very likely be subject of new releases of the Facial Animation Toolset.”

As such, the Einstein Rig is released under a Creative Commons licence on our website.
